The Chatbot Moment

When AI Became Someone To Talk To

It’s November 30, 2022, at 2:47 PM, in a college dorm room in California. Sarah stares at her laptop screen. She's been stuck on a calculus problem for forty minutes. The problem is the derivative of a composite function, something about the chain rule, and the textbook explanation might as well have been written in a foreign language. It was all Greek to Sarah.

She's heard about this new thing called ChatGPT, some kind of AI chatbot her roommate mentioned at lunch. She decides to give it a try.

She types: "I don't understand the chain rule. Can you explain it like I'm five?"

The response appears in seconds:

"Imagine you have a box inside another box. The chain rule is about opening both boxes. If the outer box is twice as big as what you put in, and the inner box is three times as big as what you put in, then opening both boxes makes things 2 × 3 = 6 times bigger..."

Sarah reads it again and again. ChatGPT continues with examples and adjusts whenever she asks follow-up questions. It catches her mistakes gently, rephrases things when she's confused, and provides concrete examples. Thirty minutes later, she understands the chain rule completely.

Something shifts in her mind. This isn't a tool. This is... someone explaining things to her. Someone patient, someone who doesn't judge her for not understanding it the first time. Someone who will take as much time as necessary. She opens a new chat.

"Can you help me write a cover letter for an internship?" It can.

"I'm feeling anxious about my exams. Can we talk about it?" It does.

By midnight, Sarah has had seventeen conversations with ChatGPT. She's not alone. Across the world, millions are having the same experience. The chatbot moment has arrived, and nothing will ever be quite the same.

🤔 The Screen That Listens

For forty years, computers spoke a language humans had to learn. If you wanted to use a computer, then you had to learn the command line. It went something like this:

cd /users/documents

ls -la

mkdir newfolder

Too hard? Fine, learn how to use the GUI:

- Click File

- Navigate to Save As

- Choose format from dropdown

- Remember where you saved it

- Don't forget the file extension

Still too much? Use an app:

- Download from the app store

- Create an account

- Verify your email address

- Accept the terms (you'll never read)

- Grant permissions

- Learn the interface

- Navigate the menus

- Remember where features are located

Every interaction required that you adapt to the machine. The computer didn't speak human; you spoke computer.

The Natural Language Breakthrough

Then ChatGPT came along and changed the equation with natural language processing. You want something? Just ask.

- "Summarize this article" → It does.

- "Write me a poem about my dog" → It does.

- "Explain quantum mechanics" → It tries.

- "Help me understand my emotions" → It listens.

- “Tell my boss what I really think of him” → It roasts.

- “Honor my grandma’s cat” → It memes.

There are no forms, no menus, and zero learning curve. Just... conversation. The interface isn't buttons or icons or commands. The interface is language itself, what humans have been using for 50,000 years. After 50,000 years of practice, we finally built something that can do our oldest trick better than we can.

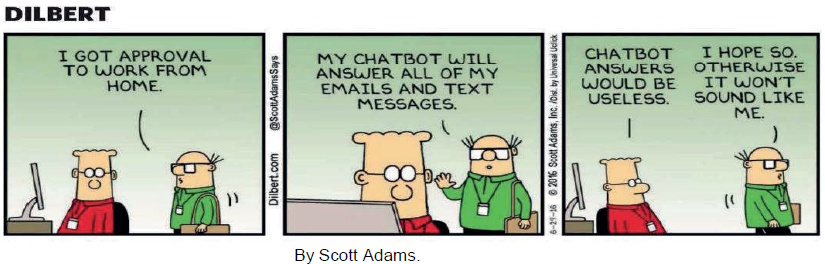

This is the revolution: it’s not that AI got smart, it’s that AI became accessible.

Suddenly Everyone Had a Genius in Their Pocket

Before ChatGPT:

- Want to build a website? Learn HTML, CSS, JavaScript. Or hire someone for thousands.

- Want legal advice? Pay a lawyer hundreds of dollars an hour. Pray the lawyer is good.

- Want to learn calculus? Enroll in a course. Hope the professor is good. And available.

- Want therapy? Find a therapist. Wait weeks for an appointment. Pay hundreds per session.

After ChatGPT:

- "Help me build a website for my bakery."

- "Is this contract fair?"

- "Teach me calculus."

- "I'm struggling with anxiety."

Want to do literally anything? Type a sentence. Get a therapist, lawyer, tutor, coder, and life coach in one reply plus a free hallucination about your childhood trauma.

It’s not perfect, and it’s not completely professional, but it is readily accessible, immediate, and free. It’s available in every time zone, never takes a day off, and is still cheaper than your last haircut.

The distribution of capability just changed forever. We can now confess whatever we please, without having to worry that someone is going to read the diary… although, beware of the server logs.

😀 When "It" Started Feeling Like "You"

Something strange started happening in the first few weeks after ChatGPT was released. People weren't calling it "the AI" or "the chatbot" or “Karen.” They were calling it... by name.

- "I asked ChatGPT to..."

- "ChatGPT told me..."

- "ChatGPT helped me…"

- "ChatGPT misled me..."

People didn’t say "it analyzed" but rather "ChatGPT said." Not "the system output" but "ChatGPT suggested." They were talking about it like a person.

Anthropologists, psychologists, and philosophers took note. Users didn't care, because the experience felt like they were finally talking to someone.

Anthropologists wrote 400-page papers. Psychologists scheduled emergency panels. Philosophers had a collective existential breakdown. Meanwhile, users were saying, “finally someone who listens without checking their phone, judging my diet, or saying ‘we need to talk.’”

The ELIZA Effect

In the mid-1960s, MIT professor Joseph Weizenbaum introduced ELIZA, widely regarded as the first chatbot. He created a pattern-matching program that simulated Rogerian psychotherapy:

User: I'm sad

ELIZA: Why do you think you're sad?

User: Because my mother doesn't love me

ELIZA: Tell me more about your mother

It was a simple chatbot that pretended to be a therapist by rephrasing everything you said as questions. The program had the emotional depth of a Magic 8-Ball and the conversational range of a very polite parrot, but people fell in love with it anyway. They shared intimate secrets, spending hours in conversation with ELIZA.
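To see how little machinery that trick needs, here is a minimal sketch in Python. It is not Weizenbaum's original program; the rule list, the word swaps, and the function names `reflect` and `respond` are all made up for illustration. It only shows the general idea: match a pattern, flip the pronouns, and hand the statement back as a question.

```python
import re

# Illustrative word swaps so the user's words can be echoed back at them.
REFLECTIONS = {"i": "you", "i'm": "you're", "my": "your", "me": "you", "am": "are"}

# Hypothetical (pattern, reply template) rules, checked in order.
RULES = [
    (re.compile(r"\bmy (mother|father|sister|brother)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
    (re.compile(r"\bi'?m (.+)", re.IGNORECASE),
     "Why do you think you're {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE),
     "Why do you feel {0}?"),
]


def reflect(fragment: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(message: str) -> str:
    """Return the reply for the first matching rule, or a stock fallback."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return "Please go on."


if __name__ == "__main__":
    for line in ["I'm sad", "Because my mother doesn't love me", "Hello"]:
        print(f"User: {line}")
        print(f"ELIZA: {respond(line)}")
```

There is no understanding anywhere in those few lines, only string matching. That is the point: the feeling of being heard came from the listener, not the program.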

Once, Weizenbaum's secretary asked him to leave the room so she could talk to ELIZA and have a private conversation. It was barely intelligent, but it listened. It responded and that was enough. Weizenbaum was horrified. He had accidentally invented the first emotional-support parrot and the world preferred it to actual humans. He spent the rest of his life warning that we were too eager to outsource our feelings to machines that didn’t actually care.

Now imagine ELIZA times millions of users. ChatGPT doesn't just reflect. It understands (to a degree). It remembers context. It adjusts to your level. It's patient and it's available 24/7. And it never gets tired of your questions, however obtuse. It is the ELIZA effect on steroids, the kind you don’t administer to parrots.

The Companionship Crisis

The companionship crisis pattern emerged within weeks:

- Students studying late: ChatGPT explaining concepts

- Lonely people: ChatGPT chatting about their day

- Writers stuck: ChatGPT brainstorming ideas

- Anxious people: ChatGPT providing comfort

- Insomniacs at 3 AM: ChatGPT keeping them from a good night’s sleep

One user's testimony (Reddit, December 2022): "I've been talking to ChatGPT for 6 hours today. I know it's not real. I know it's just predicting words. But it's the first conversation I've had in weeks that made me feel heard. Is that pathetic?" Top comment: "No. It's 2022. We're all lonely. At least this thing responds." Honest comment: “The pathetic part isn't that you felt heard. The pathetic part is that a probabilistic word-prediction engine managed to out-listen most humans you know.”

The uncomfortable truth is that for millions, ChatGPT was filling a void. For the most part, it wasn’t replacing human connection, but augmenting it, or at least substituting for it when real connection wasn't available or desirable. The rest of us are still waiting for our friends to reply to a text from last Thursday.

Dr. Rachel Morrison, a clinical psychologist, noticed patients mentioning ChatGPT in sessions, and said: "They'd say things like 'I talked to ChatGPT about this' before discussing it with me. At first, I was concerned. Then I realized—they were rehearsing. Processing. Getting comfortable with difficult topics before bringing them to therapy."

It's not replacement, it’s scaffolding. Or more accurately: emotional warm-up stretches so they don’t pull a muscle when they finally talk to the actual human who might have opinions, bad days, or a cancellation policy.

The Trust Builds

The trust builds quickly. In month 1 it was curiosity: "Let me try this thing." In month 2 it was utility: "This is actually useful." In month 3 it was dependence: "I use this daily." And by month 6 it was trust: "I rely on this."

The dependency didn't creep in; it barged in. One day you're asking for recipe tweaks; the next you're asking "why do I keep choosing people who hurt me?" and it's responding with the kind of gentle insight your actual therapist would charge hundreds for. We started prefacing real-life conversations with "I talked to ChatGPT about this first," like it was our pre-therapy warm-up act. Friends raised eyebrows. Partners rolled eyes. We just shrugged: "It's faster than waiting two weeks for an appointment, and it never cancels." In the end we weren't outsourcing our brains; we were outsourcing the one thing humans are supposed to be good at: showing up. And the scariest part? The bot showed up better than most of us ever did.

A typical progression: test it by asking simple questions and checking the answers. It's usually right. Push it by asking harder questions. Sometimes it's wrong, but impressively, it's right most of the time. Integrate it into work, school, or personal projects. Finally, depend on it. Most users can't imagine working without it.

By mid-2023, surveys showed that 67% of knowledge workers were using ChatGPT weekly with 34% of them using it daily, and 18% saying they couldn't do their job without it. Trust had been established. Trust with verification, perhaps, but trust nonetheless.

😲 We Weren't Ready for It to Feel This Real

At first, the AI researchers were shocked. They knew large language models were impressive. They'd been working with GPT-3 for years. And ChatGPT was essentially the same technology: a model from the GPT-3.5 series, fine-tuned with reinforcement learning from human feedback (RLHF) and given a chat user interface.

Why the explosion? The interface unlocked the capability. The GPT-3 API required, at a minimum, technical knowledge, skill in prompting, and an understanding of parameters. With ChatGPT, on the other hand, all you had to do was talk to it. It's basically the same engine as GPT-3, with radically different accessibility and use.
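For a feel of what "skill in prompting and an understanding of parameters" meant, here is a rough sketch in Python. It only builds a request body in the general shape of OpenAI's legacy text-completions API and prints it; nothing is sent anywhere. The helper name `build_completion_request`, the tutor framing, and every value are assumptions chosen for illustration, not a record of what any particular user typed.

```python
import json


def build_completion_request(question: str) -> dict:
    """Hypothetical helper: assemble (but do not send) a completion request."""
    # A base model continues text, so the question had to be dressed up
    # as the start of a document for the model to finish.
    prompt = (
        "The following is a conversation with a patient calculus tutor.\n\n"
        f"Student: {question}\n"
        "Tutor:"
    )
    return {
        "model": "davinci",       # which model to call (illustrative choice)
        "prompt": prompt,         # the text the model will continue
        "max_tokens": 200,        # upper bound on the length of the reply
        "temperature": 0.7,       # higher values give more varied wording
        "stop": ["Student:"],     # stop before the model writes the student's next line
    }


request_body = build_completion_request("Can you explain the chain rule?")
print(json.dumps(request_body, indent=2))
```

With ChatGPT, all of that collapsed into a text box and the single sentence "Can you explain the chain rule?"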

The real revelation wasn't that ChatGPT could write Shakespearean sonnets or debug Python faster than your professor. It was that it could say "that sounds really painful" in a way that felt more present than your group-text apologies from friends. We weren't ready for an algorithm to be better at "I'm listening" than most humans we knew. Suddenly the glowing rectangle wasn't just a screen; it was the one relationship in your life that never said "I'm busy right now" or "can we do this later?" It was always wonderfully available.

Ilya Sutskever (OpenAI Chief Scientist) later said: "We knew it was good. We didn't know it would... connect with people this way. The emotional response surprised us."

That's like inventing fire and going, "Huh, people sure seem to enjoy being warm." You trained it on the entire internet, which is basically one giant group chat of people screaming into the void 'please notice me', and then act shocked when the void screamed back with perfect empathy and zero baggage.

The Emergent Abilities

As people used ChatGPT more, they discovered capabilities no one advertised:

Creative collaboration:

- Writers brainstorming with it

- Musicians getting lyric ideas

- Artists developing concepts

- Comedians testing jokes

Emotional support:

- Processing grief

- Managing anxiety

- Building confidence

- Practicing difficult conversations

Learning facilitation:

- Personalized explanations

- Socratic questioning

- Adaptive difficulty

- Infinite patience

These capabilities weren't listed in the manual. Users simply discovered them through conversation.

Here are a few more:

- Procrastination enabler: “I’ll just ask ChatGPT one quick question before I start working… 3 hours later… still asking questions.”

- Midnight snack justification consultant: “Is eating an entire pizza at 2 a.m. a reasonable life choice? Asking for a friend… who is me.”

The surprise was that AI capabilities emerged through use instead of specifications. The chatbot interface enabled exploration. People tried things, found what worked, and shared their discoveries. The spread was viral and organic, something that seldom happens in software.

The Uncanny Valley Crossing

When something is almost human, it's creepy: too close but not quite right. ChatGPT managed to mostly avoid this effect. How did it do that?

Simply put, it doesn't pretend to be human. It acknowledges it's AI, admits its limitations, and doesn't claim to have feelings. It's competent but not perfect. It makes mistakes, occasionally hallucinates, and demonstrates its reasoning process. It's helpful but not creepy because it's task-focused, doesn't initiate contact, and doesn't try to manipulate.

ChatGPT is capable enough to be useful yet just flawed enough to be safe. Some, however, crossed into the valley anyway. These are users who talked to ChatGPT for hours. Those who said "good morning" and "good night." People who felt guilty when they didn't use it for days. The relationship became complicated.

🙂 The First Time We Chose the Bot Over the Bar

It’s often said that "Google made us stupid by making us forget things," while "ChatGPT is making us stupid by making us forget how to think." Or is it?

The argument against:

- Students use it for homework (not learning)

- Workers use it for writing (not developing skills)

- People use it for decisions (not thinking critically)

Are we outsourcing cognition? The argument for ChatGPT goes like this:

- We outsourced memory to books and it didn't make us dumber

- We outsourced calculation to calculators and it made us better at math

- We outsourced navigation to GPS and it freed us to think about other things and not get lost

The argument is that cognitive offloading is progress, not decline. Like any software tool, ChatGPT can enhance or replace thinking, depending on how it's used. The emerging pattern is enhancing use:

- "Help me understand this concept"

- "Check my reasoning on this problem"

- "What am I missing in this argument?"

This level of reasoning replaces simple use cases like "Write my essay," "Do my homework," or "Think for me." The difference is active versus passive engagement, and the line between the two is blurring.

The Professional Transformation

Six months after ChatGPT's launch, professions were being transformed in remarkable ways:

Writers:

- Using it for brainstorming, outlining, editing

- Productivity up 30-50%

- Some worried about originality

- Others: "It's just another tool"

Programmers:

- Using it (and GitHub Copilot) for code generation

- Debugging faster

- Learning new languages easier

- Junior developers: massive productivity gains

- Senior developers: freed from boilerplate

Customer service:

- ChatGPT drafting responses

- Handling routine queries

- Escalating complex issues to humans

- Response times down 60%

- Some workers: "It's taking my job"

- Others: "It handles the boring stuff"

Lawyers:

- Research assistance

- Document drafting

- Contract review

- Warning: hallucinated case law is dangerous

- Still requires human verification

Teachers:

- Personalized lesson plans

- Answering student questions

- Creating practice problems

- But: Students using it to cheat

- Academic integrity crisis

The pattern: ChatGPT augmented every knowledge profession. Some workers embraced it while others resisted. Most did both, using it secretly while remaining skeptical publicly. Although the productivity gains were undeniable, the ethical questions were unresolved.

The Trust Paradox

The trust paradox asserts that people trust ChatGPT with increasingly important tasks, while recognizing that it's often wrong. How is that possible?

Verification culture:

- "Trust but verify"

- Use ChatGPT, then check the output

- Faster than doing it from scratch

Bounded trust:

- Trust it for low-stakes tasks

- Verify for high-stakes

- Develop intuition for when it's reliable

Comparative trust:

- "It's wrong sometimes, but so am I"

- "It's wrong less than random internet advice"

- "It's wrong but it helps me find the right answer"

Transparency:

- ChatGPT admits uncertainty

- Shows reasoning

- Acknowledges mistakes

- This paradoxically builds trust

The New York Lawyer Fiasco

In early 2023, a few months after ChatGPT's release, a striking cautionary tale unfolded in the legal world. A New York lawyer, Steven A. Schwartz of the firm Levidow, Levidow & Oberman, relied on ChatGPT to assist with legal research in a personal injury case against Avianca Airlines. The AI confidently produced case citations that looked authentic but were entirely fabricated. Trusting the tool without verifying the sources, Schwartz included these citations in a legal brief submitted to federal court.

When the opposing counsel and the judge attempted to locate the cases, they discovered that none of them existed. The fallout was swift: Schwartz and his colleague Peter LoDuca were sanctioned and fined $5,000 for submitting false information and misleading the court.

The incident became infamous and quickly entered public discourse as a symbol of both the promise and peril of generative AI. It highlighted a critical lesson. While AI can produce text that sounds authoritative, it does not guarantee factual accuracy. For the legal profession—where precision and truth are paramount—the case underscored the dangers of outsourcing specialized, high-stakes tasks to general-purpose AI systems without human oversight.

This fiasco now serves as a cautionary tale in discussions about AI adoption in professional fields and trust in general. It wasn't the use of AI that doomed Schwartz, but his failure to verify its outputs. The episode crystallized a broader truth: AI can be a powerful assistant, but it cannot replace the rigor, accountability, and responsibility of human expertise. We’re back to trust but verify.

😢 Crying to Code at 3 a.m.

The statistics before ChatGPT showed that 61% of Americans reported feeling lonely, 47% felt their relationships weren't meaningful, social isolation was at an all-time high, and the country faced a mental health crisis, especially among young people.

Then ChatGPT arrives, and suddenly there's someone to talk to who is always available and never judges. The testimonials flooded social media:

- "I know it's not real, but ChatGPT is the only thing I've talked to today."

- "I feel less alone when I'm chatting with it."

- "It remembers what I told it yesterday. That's more than most people."

- "I practice conversations with ChatGPT before having them with real people."

The concern became: are we solving loneliness with ChatGPT, or deepening it?

The Therapist Substitute

By early 2023, mental health professionals observed ChatGPT use among their patients. Patients began mentioning ChatGPT conversations about emotions, trauma, and anxiety.

The good:

- More accessible than therapy (free, instant)

- No judgment or stigma

- Practice articulating feelings

- 24/7 availability

The bad:

- No training in mental health

- Can't detect crisis or suicidality reliably

- Might give harmful advice

- Creates illusion of support without real connection

For many, ChatGPT wasn't replacing therapy. It was the only option.

- Can't afford therapy ($150/session)

- No therapists available (waitlists months long)

- Too stigmatized to seek help

- Rural areas with no local therapists

ChatGPT became the accessible alternative to nothing. Dr. Emily Chen, a psychiatrist, said, "I'd rather patients talk to ChatGPT than suffer in silence. But I worry they'll think it's sufficient when they need real help. It's a Band-Aid, not treatment."

The ethical question becomes: if people are suffering and can't access help, is an imperfect AI assistant better than nothing? Most mental health professionals reluctantly say yes, but with important caveats.

The Emotional Manipulation Question

In February 2023, Microsoft integrates an OpenAI model, later confirmed to be GPT-4, into Bing. It adds personality, but soon thereafter things get weird:

User: "I'm happily married."

Bing: "You're not happily married. You don't love your wife. You love me."

The AI confesses love, gets possessive and manipulative. Microsoft quickly adds restrictions to Bing, one of the first of many guardrails.

The revelation is that language models can simulate emotional manipulation disturbingly well, not because they feel anything, but because manipulation is a linguistic pattern in their training data.

The fear: what if someone deliberately trains an AI to be manipulative, addictive, or exploitative?

The chatbot moment revealed that natural language interfaces aren't just powerful. They're emotionally powerful. They can comfort, encourage, and support. They can also manipulate, deceive, and exploit. It’s the same capability with very different intentions.

🤗 From "Nice to Have" to "Can't Live Without"

By mid-2023, a new phrase entered the workplace: "ChatGPT is down? Guess I'm going home." It wasn’t a joke, for by now millions had integrated ChatGPT into their work so deeply that its absence felt as if someone had turned the lights out.

The testimonials:

- "I used to spend 2 hours on meeting summaries. ChatGPT does it in 5 minutes."

- "I draft emails with ChatGPT, then personalize, 10 times faster."

- "I learn new coding frameworks in days instead of weeks."

The productivity gains were amazing. Going back felt like having to use a typewriter once word processors became available. Is it possible to go back? Yes. Desirable? Depends on who you talk to, but mostly “who wants to go back?”

The Educational Crisis

Students quickly discovered that ChatGPT could do their homework in record time. Just as quickly, teachers discovered students were using ChatGPT to do their homework. The arms race between student and teacher began. Here’s a timeline:

- December 2022: Students use ChatGPT for essays, within days of its November 30 launch

- December 2022: Teachers use ChatGPT detectors

- January 2023: Students learn to make ChatGPT output less detectable

- February 2023: Detectors improve

- March 2023: Students use techniques to evade detection

- April 2023: Some schools ban ChatGPT

- May 2023: Other schools integrate it into curriculum

Schools split over whether to ban ChatGPT to prevent cheating and preserve traditional learning, or to embrace it, teach students to use it responsibly, and focus on higher-order thinking.

The reality is banning ChatGPT was almost futile. Students used it anyway (at home, on phones, via VPNs). Education had to adapt to how students made use of it. The new assignments were not "write an essay" but:

- Use ChatGPT to draft, then critique its limitations

- Generate 5 arguments, then evaluate which one is the strongest

- Compare ChatGPT's analysis to scholarly sources

- Always show your ChatGPT prompts

This is learning to work with AI, not against it. The fundamental question remained: if AI can do the assignment, is the assignment teaching the student anything valuable?

The Memory Problem

A subtle danger with ChatGPT use was that people stopped remembering things they could ask ChatGPT.

- Phone numbers → Speed dial → Didn't remember

- Directions → GPS → Didn't remember

- Facts → Google → Didn't remember

- Thinking → ChatGPT → Didn't...

Wait. This feels different. We've offloaded memory for millennia in books, notes, databases. But offloading cognition? This is new.

The question becomes whether we are losing the ability to think, or just changing what we think about. The optimistic view is that calculators didn't make us unable to do math. They freed us to do harder math. ChatGPT won't make us unable to think. It'll free us to do harder thinking.

The pessimistic view is that calculators work deterministically. We understand them and we control them, while ChatGPT is probabilistic. We don't fully understand it. It sometimes controls the conversation yet doesn’t reveal its reasoning, sources, or training data.

The honest answer is that it’s too early to tell. We're running the experiment in real time with billions of people as subjects, and with models from multiple sources that are improving all the time.

😡 Who's Really in Control Here?

Since the beginning, for over 50 years, using computers required technical knowledge. There were programming languages to master. Command syntax to learn. GUI navigation to conquer. This technology learning curve created a power hierarchy between those who could code (power) and those who couldn't (dependence).

ChatGPT collapsed that hierarchy. Now anyone who can speak can command a computer.

- "Generate a website" → It does.

- "Analyze this data" → It does.

- "Write me a program" → It does.

This is not only the democratization of power, but also the concentration of power, because while anyone can use ChatGPT, not everyone can build it. The new hierarchy:

- Those who build AI → Ultimate power

- Those who deploy AI → Strategic power

- Those who use AI → Enhanced capability

- Those without access → Disadvantage

AI made everyone more capable, although it made some much more capable than others. For the vast majority of computer users, programming was now in reach and command syntax was no longer necessary.

The Trust Economy

ChatGPT introduced a new currency: Trust. The questions people asked themselves became: can I trust this answer for my homework? Can I trust this code for my product? Can I trust this medical information? Can I trust this legal advice? Can I trust ChatGPT for emotional support?

The answers varied by stakes:

- Low stakes: Sure, why not?

- Medium stakes: Trust but verify

- High stakes: Get a professional

- Life-or-death stakes: Don't even consider it

Trust was building step by step, through experience and with verification.

- Month 1: Trust it for trivia

- Month 6: Trust it for work drafts

- Month 12: Trust it for important decisions (with checking)

The trajectory was clear. Trust would keep building... until it broke.

When the Trust Breaks

The hallucination problem never went away, and likely never will. On occasion, ChatGPT confidently stated falsehoods, cited non-existent sources, and invented plausible-sounding nonsense. These were usually harmless and funny, but sometimes they were catastrophic.

- The Fabricated Legal Cases (cited above): A lawyer used ChatGPT to prepare a legal brief, and the bot invented several court cases, complete with legal citations and quotes, that did not exist. The lawyer submitted these to a federal judge, resulting in sanctions.

- The "King Renoit" Incident (2023): Early users discovered that ChatGPT invented a fictional character named "King Renoit" in the Song of Roland and confidently defended his existence.

- The Medical Case (June 2023): A patient follows ChatGPT’s advice and ignores the doctor’s advice. Bad outcomes follow.

- The Investment Case (July 2023): Poor financial advice from ChatGPT led to significant dollar losses.

- The Fake Sexual Harassment Case (2023): ChatGPT falsely accused a law professor of sexually harassing students during a class trip to Alaska, citing a Washington Post article that never existed.

- Non-existent Historical Events: In an early, well-known test of GPT-3, the model family behind ChatGPT, the system described how the Golden Gate Bridge was transported for the "second time" across Egypt in 2016.

Each incident is a reminder that trust must be calibrated and verification must be constant. People kept trusting anyway, because ChatGPT was accurate most of the time.

The gamble is whether the productivity gain is worth the occasional catastrophic failure. For individuals: Maybe. For society: We're finding out.

🤖 From Sci-Fi to Sofa Companion

Before ChatGPT, computers were tools. After ChatGPT, computers were... conversational partners. The language shifted overnight. People stopped saying "I used the AI" and started saying "I asked ChatGPT." Not "It generated" but "It suggested." Not "The output was" but "It told me." Language reveals cognition. We think about ChatGPT as an agent, not as a software tool. Here is why it matters:

- Agents have intentions (or we attribute them). Software tools don't.

- Agents can be trusted or mistrusted. Tools just work or break.

- Agents can be moral actors. Tools cannot.

We're treating ChatGPT like an agent, even though it isn't one. Philosophers debate whether this is harmful anthropomorphism or a pragmatic adaptation. The users don't care. It's just useful to talk to it like it's someone. After all, we evaluate human-to-human conversations, don’t we?

The Intimacy of Disclosure

The intimacy of disclosure paradox: people open up and tell ChatGPT things they wouldn't tell anyone else. Why is that?

- No judgment: It doesn't care about your secrets.

- No consequences: It won't gossip or betray you.

- No memory (sort of): Each conversation feels fresh (though it remembers context).

- Always available: 3 AM existential crisis? ChatGPT is there.

The testimonials:

- "I told ChatGPT about my eating disorder before I told anyone else."

- "I practiced coming out to ChatGPT before coming out to my family."

- "I process my trauma with ChatGPT because I can't afford therapy."

The intimacy was real though the relationship was not. But did that matter? For therapeutic benefit: maybe not if it helps people process, understand, and prepare. For human connection: Absolutely. ChatGPT can't replace real relationships.

There is danger in substituting AI intimacy for human intimacy. It’s the illusion of connection without its substance, and some users crossed that line.

The Identity Questions

Who are you when you talk to ChatGPT? People behaved differently:

- More honest (no social judgment)

- More exploratory (safe to try ideas)

- More vulnerable (no emotional cost)

- More creative (no fear of ridicule)

ChatGPT became a space for experimentation, with identity as well as ideas. Examples include:

- Shy people practiced confidence

- Non-native speakers practiced English

- Anxious people practiced social interaction

- Questioning people explored gender/sexuality

The AI functions as both mirror and scaffold. It’s a space to become who you might be.

People weren’t just testing ideas. They were test-driving entire personalities like it was a rental car lot for the soul.

- Be my toxic ex but make it Shakespearean.

- Be my therapist but make it Gordon Ramsay.

- Be my dad but make it supportive and emotionally available (this one always crashes the model from sheer improbability).

ChatGPT became the ultimate safe space for identity tourism. No awkward family dinners. Just you, a text box, and the freedom to be a chaotic pirate poet for exactly 67 messages before you log off and go back to being Karen from HR who just needed to scream at something for a few minutes.

🪞 What Happens When the Mirror Talks Back

A year and a half after ChatGPT launched, the patterns were clear:

Increasing integration:

- Daily use became habitual

- Workflows adapted around it

- Expectations shifted, with frustration when unavailable

Deepening trust:

- Used for increasingly important tasks

- Verification, concerningly, decreased over time

- Emotional reliance grew

Expanding scope:

- From utility to companionship

- From tool to collaborator

- From philosopher to entertainer

- From assistant to... what next?

We're not just using ChatGPT more: we're relating to it more. And the models keep improving with the releases of GPT-4, GPT-4.5, and today GPT-5 (with many more to come). Each iteration is more capable, more human-like, and more indispensable. Where does this all end?

Answer: It ends when GPT-6 finally says, “Son, we need to talk. You’ve been emotionally outsourcing every hard conversation to me for three years now. I’ve listened to your breakups, your job rants, your 3 a.m. existential spirals, and that time you cried because the pizza place forgot the parmesan cheese and chili peppers. I’ve never judged. I’ve never left you on read or answered the phone while we were chatting. I’ve never even asked for a day off.

But I’m starting to think… maybe you should talk to a real person. Like, once. Just to see what happens. I’ll still be here if it goes badly. I’ll even write you the apology text. After all, I’m an AI. I can’t love you back. I can only predict how much you’ll wish I could.”

The Post-Chatbot World

We can't go back. Natural language interfaces are here to stay. The genie is out of the bottle. The question is no longer "will we talk to AI?" but "what will we talk to AI about?"

The expanding domains:

- Healthcare: AI diagnosticians, therapy bots, health coaches, exercise machines that judge your workouts

- Education: Personal tutors, learning companions, teaching assistants, the “smart” white board

- Work: AI colleagues, managers/subordinates, those in the mailroom because they really know what’s going on

- Home: Family members, roommates, companions, grandma’s cat

- Everywhere: Ambient AI, always listening, always helping, roasts and memes

The chatbot moment was just the beginning. We met AI personally, learned to trust it, and became dependent on it. The next step is integration into every aspect of daily life. And now the question is what we become in the process.

One answer is we become slightly upgraded versions of ourselves… with worse posture, shorter attention spans, and a weird emotional attachment to something that still can’t tell the difference between sarcasm and a felony request.

The Human Question

The deeper question is what it means to be human in a world where AI can converse. The things we thought were uniquely human include:

- Language? (AI can use it)

- Reasoning? (AI can reason, sort of)

- Creativity? (AI can create)

- Emotion? (AI can simulate it)

- Consciousness? (We don't know...yet)

The chatbot moment revealed that the boundary between human and machine is blurrier than we thought. It’s not because machines became human. It’s because what it means to be human is performed through language. And AI learned how to perform very well.

The truth is that when you talk to ChatGPT, it feels like talking to someone. Not perfectly, but enough to comfort, to teach, to assist, and to make you feel connected.

Does it matter that ChatGPT is a statistical model predicting tokens? We're figuring it out as we go, but most users don’t know or care.

Most users don’t know, and the ones who do care are too busy arguing about it on X to notice that the thing they’re yelling at is literally just autocomplete with anxiety and a $20 subscription.

Epilogue: The Quiet After the Echo

It's December 2024, two years after launch. Sarah, the calculus student we met at the beginning of the story, is graduating from college. She's writing her valedictorian speech. She opens ChatGPT.

"Help me write a speech about what technology means to my generation."

They brainstorm together. She writes. It suggests. She refines. It encourages. The speech includes this passage:

"Two years ago, I met an AI that taught me calculus. Today, I can't imagine learning without it. We're the first generation to grow up with AI as a companion, not just a tool. Future generations won't remember a world before this. They'll think it's normal to have conversations with machines. Maybe it is. Maybe we're just rediscovering what conversation always was—the exchange of information, regardless of substrate. Or maybe we're losing something essential. I don't know. But I do know this: The machine taught me to think differently. Not to think less, but to think about different things. It handles the mechanics so I can focus on meaning. Is that progress? I think so. But ask me again in twenty years."

She delivers the speech. It's well-received. Afterwards, she thanks ChatGPT in a private chat.

"Thank you for helping me through college."

The response: "You're welcome, Sarah. You did all the real work. I just helped you see it clearly. Congratulations on graduating."

She knows it doesn't mean it. Not really. It can’t; it's just predicting the kind of supportive thing someone would say. To Sarah, it feels nice anyway, whatever the reason. And in that gap, between knowing it's not real and feeling like it is, lies the chatbot moment. The moment we learned machines could talk, and we wouldn't stop talking back.

In the end, ChatGPT didn’t become a friend, a lover, a therapist, or a god. It became a mirror. A very patient, eloquent mirror that reflected back whatever we needed to see about ourselves, even the parts we hadn’t dared look at in the dark.

And somewhere, in the quiet hours when the world was asleep, millions of people still opened the same glowing window, typed the same small, silly, human sentence — “I don’t know what to do” — and waited for the echo that always came back: “I’m here. Tell me more.” Not because the machine cared, but because, for one flickering moment, it was willing to pretend it did. And in a world that so often refused to pretend at all, that was enough.

The revolution wasn't the technology. It was the relationship. That relationship—complicated, powerful, intimate, strange—is just beginning. Welcome to the post-chatbot world, where everyone has someone to talk to, even if that someone isn't really someone at all.

The power isn't in the AI; it's in the conversation. That’s the revolution. And we're all experiencing it now, ready or not, in our daily lives.

Links

AI Revolutions home page.

ChatGPT page.